SparkVSR: Open Source Interactive Video Super-Resolution


SparkVSR is a research framework from Texas A&M University and YouTube/Google that restores and upscales degraded video by propagating detail from a small number of user-supplied keyframes across an entire sequence. The code and model weights are publicly available under the Apache 2.0 license, which permits commercial use. The technical paper was published in March 2026.

What SparkVSR Does

Standard video super-resolution models apply a fixed restoration pass to every frame without user input. SparkVSR adds a control layer: users select sparse keyframes and supply high resolution versions of those frames. The model then propagates that detail across the full video, anchoring the output to the user's references rather than relying entirely on blind estimation.

This matters in practice because blind restoration tends to hallucinate texture. When the model has a ground truth keyframe, it locks the output to that content and interpolates consistently through the surrounding frames.
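As a rough illustration of what "sparse keyframes" can mean in practice, the sketch below picks evenly spaced frame indices from a clip. The function name `pick_keyframe_indices` and the even-spacing policy are assumptions made for this example; the source does not say how users should choose keyframes, and a user may prefer to pick frames by hand at scene changes.

```python
def pick_keyframe_indices(num_frames: int, num_keyframes: int) -> list[int]:
    """Return evenly spaced frame indices (hypothetical helper, not part of SparkVSR).

    The first frame is always included; the last frame is included
    whenever more than one keyframe is requested.
    """
    if num_frames <= 0 or num_keyframes <= 0:
        return []
    # Never ask for more keyframes than there are frames.
    num_keyframes = min(num_keyframes, num_frames)
    if num_keyframes == 1:
        return [0]
    step = (num_frames - 1) / (num_keyframes - 1)
    # A set removes any duplicates produced by rounding.
    return sorted({round(i * step) for i in range(num_keyframes)})


if __name__ == "__main__":
    # A 49-frame clip with three keyframes: start, middle, end.
    print(pick_keyframe_indices(49, 3))
```

Each selected frame would then be restored to high resolution separately and handed to the model as a reference.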

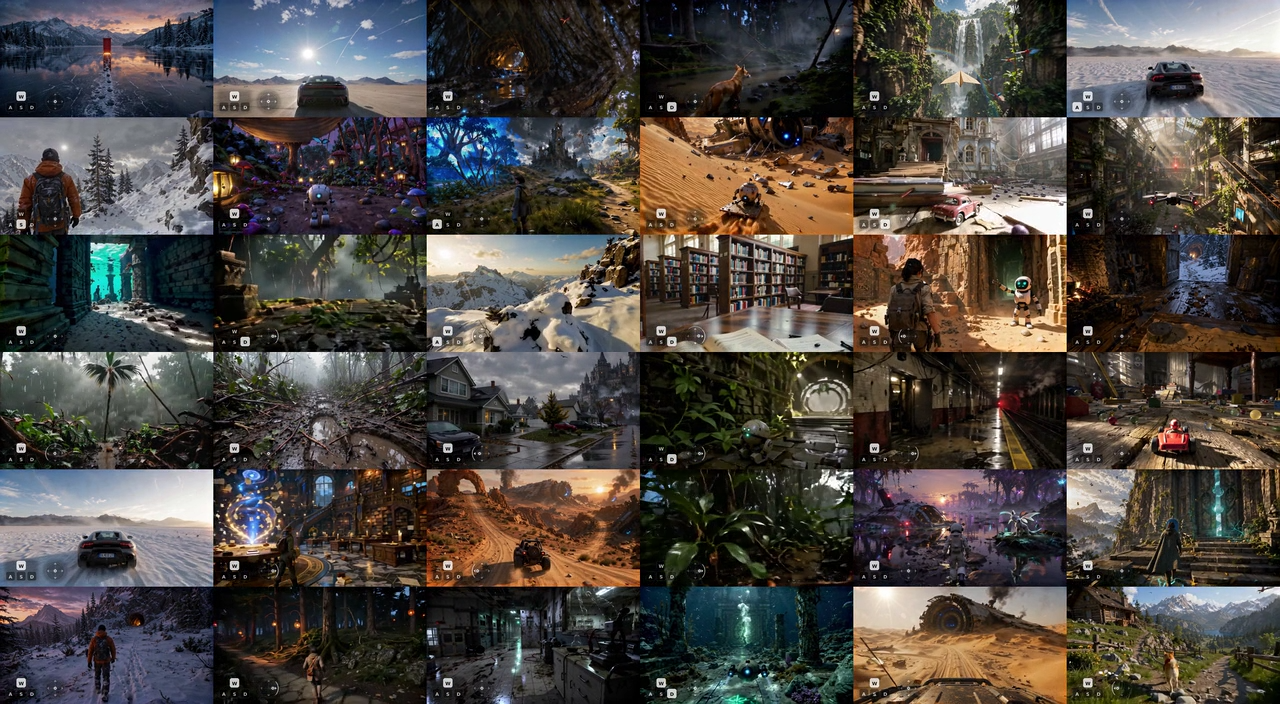

Natural Scene Video SR

Comparison: low resolution input and SparkVSR upscaled output.

Three Inference Modes

SparkVSR ships with three modes that trade control for ease of use. The first is blind restoration, where no keyframes are provided and the model performs standard video super-resolution without any reference. The second uses an external image restoration API to generate high resolution keyframes from selected frames before propagating them through the video.

The third mode, PiSA-SR, uses an open source image restoration model to restore keyframes locally without any API dependency. This keeps the full pipeline self contained and offline.
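The three modes differ only in where the high resolution keyframes come from. One way to picture that is a small enumeration, sketched below. The names `KeyframeSource`, `uses_keyframes`, and `needs_network` are hypothetical and chosen for this example; they are not the flags or identifiers used in the SparkVSR repository.

```python
from enum import Enum


class KeyframeSource(Enum):
    """Hypothetical labels for the three modes described above."""
    NONE = "blind"          # no keyframes: standard video super-resolution
    EXTERNAL_API = "api"    # keyframes restored by an external image restoration API
    PISA_SR = "pisa_sr"     # keyframes restored locally with the open source PiSA-SR model


def uses_keyframes(source: KeyframeSource) -> bool:
    """Blind restoration is the only mode that runs without references."""
    return source is not KeyframeSource.NONE


def needs_network(source: KeyframeSource) -> bool:
    """Only the API mode depends on an external service."""
    return source is KeyframeSource.EXTERNAL_API


if __name__ == "__main__":
    for source in KeyframeSource:
        print(source.value, uses_keyframes(source), needs_network(source))
```

Framed this way, the PiSA-SR mode is the only one that combines keyframe guidance with a fully offline pipeline.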

Urban Scene Video SR

Comparison: low resolution input and SparkVSR upscaled output.

Urban footage places particular demands on video super-resolution. Hard edges, repetitive architecture, and mixed lighting conditions expose inconsistencies that blind models often smooth over. The keyframe anchor keeps structural detail consistent frame to frame.

Old Movie Restoration

SparkVSR handles degraded archival footage as a distinct use case. Old film often combines multiple degradation types: grain, scratches, fading, and compression from digitization. The keyframe conditioning allows users to supply a clean reference frame and carry that restoration quality across entire scenes.

Comparison: degraded film input and SparkVSR restored output.

Upscaling AI Generated Video

AI video generators available in AI FILMS Studio typically produce output at lower resolutions than cinema delivery requirements. SparkVSR offers a post processing path: generate a low resolution clip, restore a keyframe with any image super-resolution tool, then run SparkVSR to propagate that quality across the full sequence.

On benchmark metrics, the keyframe guided approach outperformed baseline video super-resolution by 24.6% on CLIP-IQA, 21.8% on DOVER, and 5.6% on MUSIQ. The gains are largest in perceptual quality scores, where the keyframe reference steers the model away from generic texture fills.

AIGC Video SR

Comparison: AI generated low resolution input and SparkVSR upscaled output.

Architecture: Two Stage Latent and Pixel Pipeline

SparkVSR is built on CogVideoX1.5-5B-I2V, a diffusion transformer video model. The two stage training pipeline first trains in latent space, fusing keyframe priors with the motion from the original low resolution video. The second stage refines at the pixel level, recovering fine texture and edge detail that latent compression obscures.

A reference free guidance mechanism handles frames distant from any keyframe. When the model lacks a nearby reference, it blends blind restoration with keyframe propagation, weighting the balance based on temporal distance. This prevents quality collapse in long sequences where keyframes are sparse.
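To make the idea of distance-based weighting concrete, here is a toy sketch. It is not the SparkVSR mechanism: the source only states that the balance depends on temporal distance, so the exponential decay, the `tau` parameter, and the function names `keyframe_weight` and `blend` are all assumptions for illustration. The real model applies guidance inside a diffusion process rather than mixing two finished values.

```python
import math


def keyframe_weight(frame_idx: int, keyframe_indices: list[int], tau: float = 8.0) -> float:
    """Return a weight in [0, 1] that decays with distance to the nearest keyframe.

    Assumed form: exp(-distance / tau). With no keyframes the weight is 0,
    which corresponds to purely blind restoration.
    """
    if not keyframe_indices:
        return 0.0
    distance = min(abs(frame_idx - k) for k in keyframe_indices)
    return math.exp(-distance / tau)


def blend(blind_value: float, propagated_value: float, weight: float) -> float:
    """Linear mix: weight 1 trusts the keyframe path, weight 0 trusts the blind path."""
    return weight * propagated_value + (1.0 - weight) * blind_value


if __name__ == "__main__":
    keyframes = [0, 24, 48]
    for idx in (0, 6, 12, 24):
        print(idx, round(keyframe_weight(idx, keyframes), 3))
```

Under these assumptions a frame that sits on a keyframe gets full weight, and a frame midway between two keyframes leans most heavily on blind restoration.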

Open Source and Commercial Use

SparkVSR is released under the Apache 2.0 license. This permits use, modification, and redistribution in commercial products, provided attribution and license notices are included. The model weights are available on Hugging Face in two stages: the Stage 1 weights from intermediate training and the final Stage 2 weights, which are recommended for inference.

Installation requires Python 3.10 or higher, PyTorch 2.5.0 or above, and the Diffusers library. Inference runs on a single GPU. The three model configurations cover no reference blind restoration, API based keyframe generation, and the fully open source PiSA-SR pipeline.
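The version requirements above can be checked before downloading any weights. The sketch below is one way to do that using only the standard library. The helper names (`parse_version`, `meets_minimum`, `check_environment`) are made up for this example, and the check assumes the packages are installed under the distribution names `torch` and `diffusers`.

```python
import re
import sys
from importlib.metadata import PackageNotFoundError, version

MIN_PYTHON = (3, 10)
MIN_TORCH = (2, 5, 0)


def parse_version(text: str) -> tuple[int, int, int]:
    """Turn a string such as '2.5.1+cu121' into (2, 5, 1)."""
    parts = []
    for piece in text.split("+")[0].split(".")[:3]:
        match = re.match(r"\d+", piece)  # keep leading digits, ignore suffixes like 'rc1'
        parts.append(int(match.group()) if match else 0)
    while len(parts) < 3:
        parts.append(0)
    return (parts[0], parts[1], parts[2])


def meets_minimum(installed: str, minimum: tuple) -> bool:
    """True if the installed version string is at least the minimum tuple."""
    return parse_version(installed) >= tuple(minimum)


def check_environment() -> list[str]:
    """Return a list of human-readable problems; an empty list means all checks passed."""
    problems = []
    if sys.version_info[:2] < MIN_PYTHON:
        problems.append("Python 3.10 or higher is required")
    try:
        torch_version = version("torch")
    except PackageNotFoundError:
        problems.append("PyTorch is not installed")
    else:
        if not meets_minimum(torch_version, MIN_TORCH):
            problems.append(f"PyTorch {torch_version} is older than 2.5.0")
    try:
        version("diffusers")
    except PackageNotFoundError:
        problems.append("Diffusers is not installed")
    return problems


if __name__ == "__main__":
    for problem in check_environment() or ["Environment looks compatible"]:
        print(problem)
```

This only confirms versions; it does not check GPU memory or driver compatibility, which the source does not specify.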

For a speed focused approach to AI video upscaling, the FlashVSR model achieves 17 FPS on HD content using a different distillation based architecture.

Sources

arXiv: SparkVSR: Interactive Video Super-Resolution via Sparse Keyframe Propagation

GitHub: taco-group/SparkVSR

Hugging Face: JiongzeYu/SparkVSR

Project Page: sparkvsr.github.io
