Warp-as-History: Camera Control from a Single Training Video


Researchers at Shanghai Jiao Tong University and Shanghai AI Laboratory published Warp-as-History (arXiv 2605.15182), a method that gives a pretrained video model precise camera trajectory control after fine-tuning on a single annotated video. With that one-video fine-tune, the system scores 62.00 on the WorldScore camera control benchmark, up from 26.42 for the frozen model with no fine-tuning at all. That relative gain of roughly 135% comes without modifying the base model's architecture.

Why Camera Control Is Hard

Most existing approaches require either large annotated datasets or costly test-time optimization per scene. Training from scratch on thousands of labeled clips is expensive. Optimization at inference time is slow and does not transfer across scenes.

Warp-as-History takes neither path. It feeds the target camera trajectory to the model as visual evidence through the model's own history channel, so no new components are added and no per-scene adaptation is needed after the initial LoRA fine-tune.

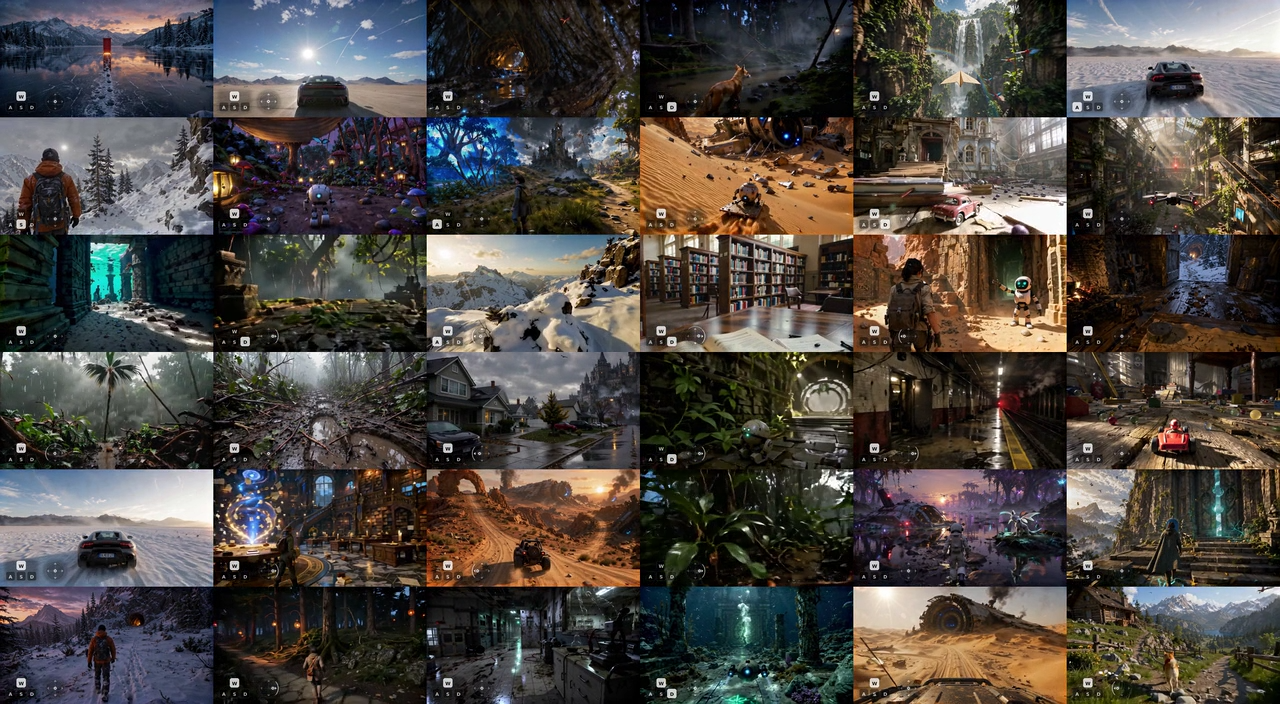

Stage scene, Warp-as-History camera trajectory control

How It Works

The method operates in four steps.

Camera-warped pseudo-history. Source frames are re-rendered from the target camera's viewpoint using geometric warping. The warped frames feed into the model's history stream, taking the place of actual past frames.
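To make the warping step concrete, here is a minimal NumPy sketch of depth-based forward warping. It is an illustration under stated assumptions, not the authors' implementation: it assumes a pinhole camera with intrinsics `K` shared by both views, a per-pixel depth map, and a known rigid transform `T_src_to_tgt`. The function name and all parameter names are hypothetical.

```python
import numpy as np

def warp_to_target(src_rgb, src_depth, K, T_src_to_tgt):
    """Forward-warp a source frame into a target camera (illustrative sketch).

    src_rgb:      (H, W, 3) source image
    src_depth:    (H, W) depth along the source camera's z-axis
    K:            (3, 3) pinhole intrinsics, assumed shared by both cameras
    T_src_to_tgt: (4, 4) rigid transform from source to target camera coordinates
    Returns the warped image and a boolean mask of target pixels that got a sample.
    """
    H, W = src_depth.shape
    v, u = np.mgrid[0:H, 0:W]
    pix = np.stack([u, v, np.ones_like(u)], axis=0).reshape(3, -1).astype(np.float64)

    # Unproject every pixel to a 3D point in the source camera frame.
    pts_src = (np.linalg.inv(K) @ pix) * src_depth.reshape(1, -1)
    # Express the points in the target camera frame.
    pts_tgt = T_src_to_tgt[:3, :3] @ pts_src + T_src_to_tgt[:3, 3:4]

    # Project into the target image, guarding against division by zero.
    z = pts_tgt[2]
    in_front = z > 1e-6
    proj = K @ pts_tgt
    safe_z = np.where(in_front, z, 1.0)
    u_t = np.rint(proj[0] / safe_z).astype(int)
    v_t = np.rint(proj[1] / safe_z).astype(int)
    ok = (in_front & (src_depth.reshape(-1) > 0)
          & (u_t >= 0) & (u_t < W) & (v_t >= 0) & (v_t < H))

    # Depth buffer: when several points land on one pixel, keep the nearest.
    idx = v_t[ok] * W + u_t[ok]
    z_ok = z[ok]
    zbuf = np.full(H * W, np.inf)
    np.minimum.at(zbuf, idx, z_ok)
    nearest = z_ok == zbuf[idx]

    warped = np.zeros((H * W, 3), dtype=src_rgb.dtype)
    warped[idx[nearest]] = src_rgb.reshape(-1, 3)[ok][nearest]
    valid = np.isfinite(zbuf).reshape(H, W)
    return warped.reshape(H, W, 3), valid
```

Pixels where `valid` is `False` received no source sample. These are the holes that appear when the camera moves and previously hidden regions come into view.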

Target frame alignment. Warped tokens receive the same temporal positional embeddings as the frames being denoised. The model interprets them as aligned context because they arrive through the exact channel it already expects history from.
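The difference between this alignment and ordinary history can be sketched with plain temporal indices. The snippet below is a simplified illustration: it assumes one warped frame per target frame and integer time indices, and it does not model the actual positional embedding used by the base model. Both function names are hypothetical.

```python
def aligned_temporal_ids(first_target_index, num_target_frames):
    """Give each warped frame the temporal index of the target frame it depicts."""
    target_ids = list(range(first_target_index, first_target_index + num_target_frames))
    warped_ids = list(target_ids)  # same index, hence the same temporal embedding
    return warped_ids, target_ids

def conventional_history_ids(first_target_index, num_history_frames):
    """Ordinary history sits strictly before the frames being denoised."""
    return list(range(first_target_index - num_history_frames, first_target_index))

warped_ids, target_ids = aligned_temporal_ids(first_target_index=8, num_target_frames=4)
print(warped_ids)                      # [8, 9, 10, 11]
print(target_ids)                      # [8, 9, 10, 11]
print(conventional_history_ids(8, 4))  # [4, 5, 6, 7]
```

Sharing indices means each warped frame is presented as evidence about the same moment its target frame represents, rather than as an earlier moment in the clip.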

Visible token selection. Warped regions covering occluded or geometrically invalid areas are masked out. The model fills those gaps during generation, completing newly visible regions without conditioning on bad geometry.
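One plausible way to turn a per-pixel validity mask into a per-token decision is to keep a token only when enough of its patch is covered by valid warped pixels. The sketch below assumes square pixel-space patches and a coverage threshold; the patch size, the threshold, and the function name are illustrative assumptions rather than values from the paper.

```python
import numpy as np

def visible_token_mask(valid_pixels, patch=16, min_valid_fraction=0.5):
    """Return a (H // patch, W // patch) boolean mask of tokens to keep.

    valid_pixels: (H, W) boolean array, True where the warp produced a sample.
    A token is kept when at least `min_valid_fraction` of its patch is valid.
    """
    H, W = valid_pixels.shape
    if H % patch or W % patch:
        raise ValueError("image size must be a multiple of the patch size")
    # Group pixels into patches, then measure the valid fraction per patch.
    blocks = valid_pixels.reshape(H // patch, patch, W // patch, patch)
    valid_fraction = blocks.mean(axis=(1, 3))
    return valid_fraction >= min_valid_fraction
```

Tokens that fail the test are simply withheld from the history stream, leaving the model free to generate those regions.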

Lightweight LoRA fine-tuning. A single LoRA adaptation runs for roughly 1,000 iterations on one annotated reference video, taking about one hour on a single GPU. This step stabilizes behavior across unseen scenes without any per-scene adaptation at inference time.
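For readers unfamiliar with LoRA, the general idea can be shown on a single linear layer: the pretrained weight stays frozen and only a small low-rank update is trained. This is a generic NumPy sketch of the technique, not the training code from the repository. The class name, rank, and scaling values are illustrative.

```python
import numpy as np

class LoRALinear:
    """A frozen linear layer plus a trainable low-rank update (generic LoRA)."""

    def __init__(self, weight, rank=4, alpha=4.0, seed=0):
        rng = np.random.default_rng(seed)
        out_features, in_features = weight.shape
        self.weight = weight                                       # frozen base weight
        self.A = rng.normal(0.0, 0.01, size=(rank, in_features))   # trainable
        self.B = np.zeros((out_features, rank))                    # trainable, starts at zero
        self.scale = alpha / rank

    def __call__(self, x):
        base = x @ self.weight.T
        update = (x @ self.A.T) @ self.B.T
        return base + self.scale * update

    def trainable_parameters(self):
        return self.A.size + self.B.size
```

Because `B` starts at zero, the adapted layer initially reproduces the frozen model exactly, and training only has to learn the small correction. The trainable parameter count is `rank * (in_features + out_features)`, which is typically a small fraction of the full weight matrix.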

The system builds on Helios, the 14B autoregressive diffusion model from Peking University, ByteDance, and Canva. Pi3X handles pose estimation. No changes are made to the Helios architecture.

Beach scene, camera trajectory following

License and Commercial Use

The code is released under Apache 2.0, which permits commercial use, modification, and redistribution with attribution. The provided LoRA weights carry CC BY-NC 4.0, which restricts them to non-commercial use.

For production pipelines, the repository includes training scripts. Teams can train their own LoRA weights on their own annotated footage. A custom LoRA trained on your own data is not covered by the CC BY-NC restriction on the provided weights, so commercial deployment is viable with that extra training step.

Performance on WorldScore

| Method | Camera Control Score | Training Data |
|---|---|---|
| Warp-as-History, frozen model (zero-shot) | 26.42 | None |
| Warp-as-History, one-video LoRA | 62.00 | 1 annotated video |

The paper benchmarks the method against CameraCtrl, MotionCtrl, and CogVideoX. Warp-as-History matches or exceeds all three on FID, FVD, LPIPS, and DINO metrics while requiring far less training data. The 135% gain in camera control from the zero-shot baseline to the single-video fine-tune is the most striking result. The frozen model already follows camera trajectories to a degree, and one hour of training more than doubles that score, to about 2.35 times the zero-shot value.
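The relative gain follows directly from the two scores in the table. A quick check, using only those two numbers:

```python
def relative_gain(baseline, improved):
    """Relative improvement of `improved` over `baseline`, as a fraction."""
    return (improved - baseline) / baseline

zero_shot = 26.42        # frozen model, WorldScore camera control
one_video_lora = 62.00   # after the one-video LoRA fine-tune

gain = relative_gain(zero_shot, one_video_lora)
ratio = one_video_lora / zero_shot
print(f"gain: {gain:.1%}, ratio: {ratio:.2f}x")  # gain: 134.7%, ratio: 2.35x
```

A gain of about 135% means the fine-tuned score is roughly 2.35 times the zero-shot score.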

For an alternative approach that removes depth estimation entirely, InfCam from KAIST uses infinite homography warping and reports 25% better camera accuracy than reprojection-based methods. For reference-based motion cloning from existing footage, CamCloneMaster provides a workflow for extracting camera motion from reference videos and applying it to generated content.

Train scene

Underground lab scene

Local Installation

Requirements:

- Python 3.10+

- PyTorch 2.5.1+ with CUDA (CUDA 12.4 recommended)

- GPU with 24 GB+ VRAM (Helios is a 14B model)

- FlashAttention 2.7.4+ (optional, speeds up inference)
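Before installing, it can help to confirm what is already present. The following is a small standard-library sketch and not part of the repository. It checks the Python version and whether the `torch` and `flash_attn` packages are importable; it does not check CUDA or VRAM.

```python
import sys
from importlib.util import find_spec

def check_environment(min_python=(3, 10)):
    """Report whether the interpreter and optional packages look usable."""
    return {
        "python_ok": sys.version_info >= min_python,
        "torch_installed": find_spec("torch") is not None,
        "flash_attn_installed": find_spec("flash_attn") is not None,
    }

if __name__ == "__main__":
    for name, ok in check_environment().items():
        print(f"{name}: {'yes' if ok else 'no'}")
```

Once PyTorch is installed, `torch.cuda.is_available()` can be used to confirm that a CUDA device is visible.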

Step 1. Clone the repository with submodules:

git clone https://github.com/yyfz/Warp-as-History --recursive

cd Warp-as-History

Step 2. Create a Conda environment:

conda create -n warp-as-history python=3.10

conda activate warp-as-history

Step 3. Install PyTorch for your CUDA version:

# Example for CUDA 12.4, adjust cu124 to match your install

pip install torch torchvision --index-url https://download.pytorch.org/whl/cu124

Step 4. Install remaining dependencies:

pip install -r requirements.txt

Step 5. Optional. FlashAttention for faster inference:

pip install flash-attn --no-build-isolation

Step 6. Download pretrained models from Hugging Face Hub:

Three downloads are required: Helios-Distilled (the base generation model), Pi3X (pose estimation), and the Warp-as-History LoRA weights (CC BY-NC 4.0). Model paths are configured in the inference config files after download.

Run the Gradio web interface:

python app.py

Run batch inference from a CSV file:

python inference.py --config configs/inference.yaml

The CSV format takes one row per generation: image path, text prompt, and camera pose parameters. The web interface handles all of that interactively for quick tests.
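A batch file of that shape can be generated with Python's `csv` module. The sketch below is illustrative only: the column names `image_path`, `prompt`, and `camera_pose`, and the choice to store the pose as a JSON string, are hypothetical placeholders. Check the repository's sample CSV for the actual header and pose encoding before using it.

```python
import csv
import io
import json

# Hypothetical column names; the repository's real header may differ.
FIELDNAMES = ["image_path", "prompt", "camera_pose"]

def build_batch_csv(jobs):
    """Serialize generation jobs to CSV text, one row per generation."""
    buffer = io.StringIO(newline="")
    writer = csv.DictWriter(buffer, fieldnames=FIELDNAMES)
    writer.writeheader()
    for job in jobs:
        writer.writerow({
            "image_path": job["image_path"],
            "prompt": job["prompt"],
            # Pose encoding is an assumption: a JSON string in a single cell.
            "camera_pose": json.dumps(job["camera_pose"]),
        })
    return buffer.getvalue()
```

Using `csv.DictWriter` rather than manual string joins ensures that prompts containing commas or quotes are escaped correctly.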

Training a Custom LoRA (Required for Commercial Use)

The training pipeline is included in the repository. Provide a single annotated video with camera poses and run:

python train.py --config configs/train.yaml --video your_annotated_video.mp4

Training runs for roughly 1,000 iterations and completes in about one hour on a single 24 GB GPU. The resulting LoRA weights belong to you and carry no CC BY-NC restriction.

What This Means for AI Filmmakers

The single-video LoRA requirement drops the training data barrier from thousands of annotated clips to one. Any team with access to a single annotated reference video and a modern GPU can adapt the model to their own scenes without cloud compute.

The licensing split is the key variable for production use. The Apache 2.0 code is commercially clear. For paid projects, train your own LoRA, which the repo fully supports. For teams who prefer cloud-based text-to-video and image-to-video generation without the local GPU requirement, AI FILMS Studio runs the latest models in a browser workspace.

Sources

- arXiv: arXiv:2605.15182

- GitHub: yyfz/Warp-as-History

- Project Page: yyfz.github.io/warp-as-history
