Nano Banana 2 Has Memorized Her Face. She Can't Opt Out.


In early 2026, AI marketer Dan Kieft shared a text prompt with his public audience. No uploaded photos. No image references. Just a structured description of a woman's appearance, pose, and jewelry. Thousands of users ran it and received what looked like the same person every time.

That person was widely identified by the online community as Alix Earle, one of the most followed lifestyle and beauty creators on YouTube and TikTok. Earle's specific gold cross necklace, bone structure, and signature hair texture appeared in every output. The prompt had not referenced her by name. Nano Banana 2, the consumer name for Google's Gemini 3.1 Flash Image model, had learned her face from watching her videos.

The CNBC Investigation

On June 19, 2025, CNBC reporter Zach Vallese published an investigation confirming that Google had drawn on its library of roughly 20 billion YouTube videos to train Gemini and the Veo 3 video generator. Multiple leading creators and IP professionals told CNBC they had no idea their uploads were being used as training data.

Google confirmed the practice to CNBC and to subsequent outlets, stating it used a "subset" of YouTube content and that doing so fell within its existing Terms of Service. What Google did not offer was an opt out.

How a Creator Becomes a Prompt

When a model trains on hundreds of hours of footage from the same person, it does not simply learn general human appearance. It learns that person's specific biometrics: jaw geometry, hair texture, posture patterns, and recurring visual style.

That depth of training makes a creator a "primitive" inside the model: a baseline concept so well established that a purely descriptive text prompt can invoke her face without any image reference. Luke Arrigoni, CEO of Loti, describes these outputs as "synthetic facsimiles" that carry the human original's likeness without that person's consent or any commercial benefit to them.

The Kieft prompt required no image reference for exactly this reason: Earle had already become a baseline concept inside the neural network.

No Way to Opt Out

Google introduced tools that allow YouTube creators to block third party companies such as Nvidia or Apple from scraping their content for AI training. But it explicitly denied creators the ability to opt out of Google's own internal AI training pipeline.

TechSpot, reporting on the CNBC story, confirmed that Google offers no opt out mechanism for its internal use, even as it acknowledges the practice. That structural gap is what Kieft's demonstration exposed. His defense, that he "only used words," is technically accurate. That accuracy is the problem.

The EU Investigation

In December 2025, the European Commission opened a formal antitrust investigation into Google's use of YouTube content and publisher material for AI training. EU Perspectives reported the probe focuses on whether creators had any meaningful ability to refuse data use and whether they received compensation.

Le Monde noted the Commission is examining whether Google used online content to train AI services without appropriate compensation or any real possibility to refuse. An earlier EU Parliament study on generative AI and copyright had already flagged the market substitution risk: when a model trained on a creator's output can replicate that creator, it becomes a direct commercial competitor to them.

The European Parliament voted in March 2026 to require AI companies to disclose and compensate rightsholders for content used in training. That vote and its implications for creators working in the EU are covered in our analysis of what EU lawmakers decided on copyright in the age of AI.

The Economic Dimension

The Kieft incident crystallized an economic argument that creators have raised since AI image generation went mainstream. Marketers are now using consistent character generation to build synthetic influencers. Those influencers compete directly with the human creators whose training data made the models capable of generating them.

Earle's public response focused on specifics: her gold cross pendant, her jewelry aesthetic, and her visual style had been turned into a free resource for competitors. The personal detail matters because it names the mechanism. The model did not learn "a woman." It learned her.

What the Model Is

Nano Banana 2 is Gemini 3.1 Flash Image. According to The Decoder, it delivers roughly 95 percent of Nano Banana Pro's capabilities and integrates Google Search grounding for real world visual context. Google DeepMind's Paige Bailey has described the Nano Banana line as capable of "world knowledge" grounding, meaning outputs draw on what the model has absorbed about real people, places, and objects.

The training data behind that world knowledge draws on a library of roughly 20 billion YouTube videos. Those videos include faces. For an introduction to how the model handles prompts in practice, see our overview of the Nano Banana prompt library.

Where the Law Stands

California's digital replica laws, AB 2602 and AB 1836, took effect January 1, 2026. They require explicit consent and fair compensation when a performer's likeness is replicated commercially. SAG-AFTRA is also pushing to treat AI performer usage as a taxable event within production budgets.

But those frameworks address deliberate commercial replication. They do not yet address the training data mechanism that makes a purely descriptive text prompt sufficient to generate a specific person's face. That gap, between what the law covers and what the technology does, is what this controversy exposed.

YouTube has since built a separate system operating at the distribution end of this chain. In April 2026, the platform extended its AI likeness detection tool to talent agency clients, letting enrolled celebrities flag AI generated replicas in newly uploaded videos. The mechanics, enrollment requirements, and limits of that tool are documented in our analysis of YouTube's deepfake detection expansion to Hollywood talent agencies.

For a full breakdown of what the California laws require now that they are enforceable, including consent language and exemptions, see our guide to California's Digital Replica Law in 2026.

The Nano Banana controversy is not a glitch. It is a live demonstration of what happens when a model trains on 20 billion videos belonging to people who had no say. Until the opt out mechanisms that regulators are debating become enforceable, every YouTube creator is inside the training set.

To see how Nano Banana Pro 2 handles every parameter in AI FILMS Studio, from model selection and reasoning levels to web search grounding and the Node Graph Editor, follow our step by step tutorial.

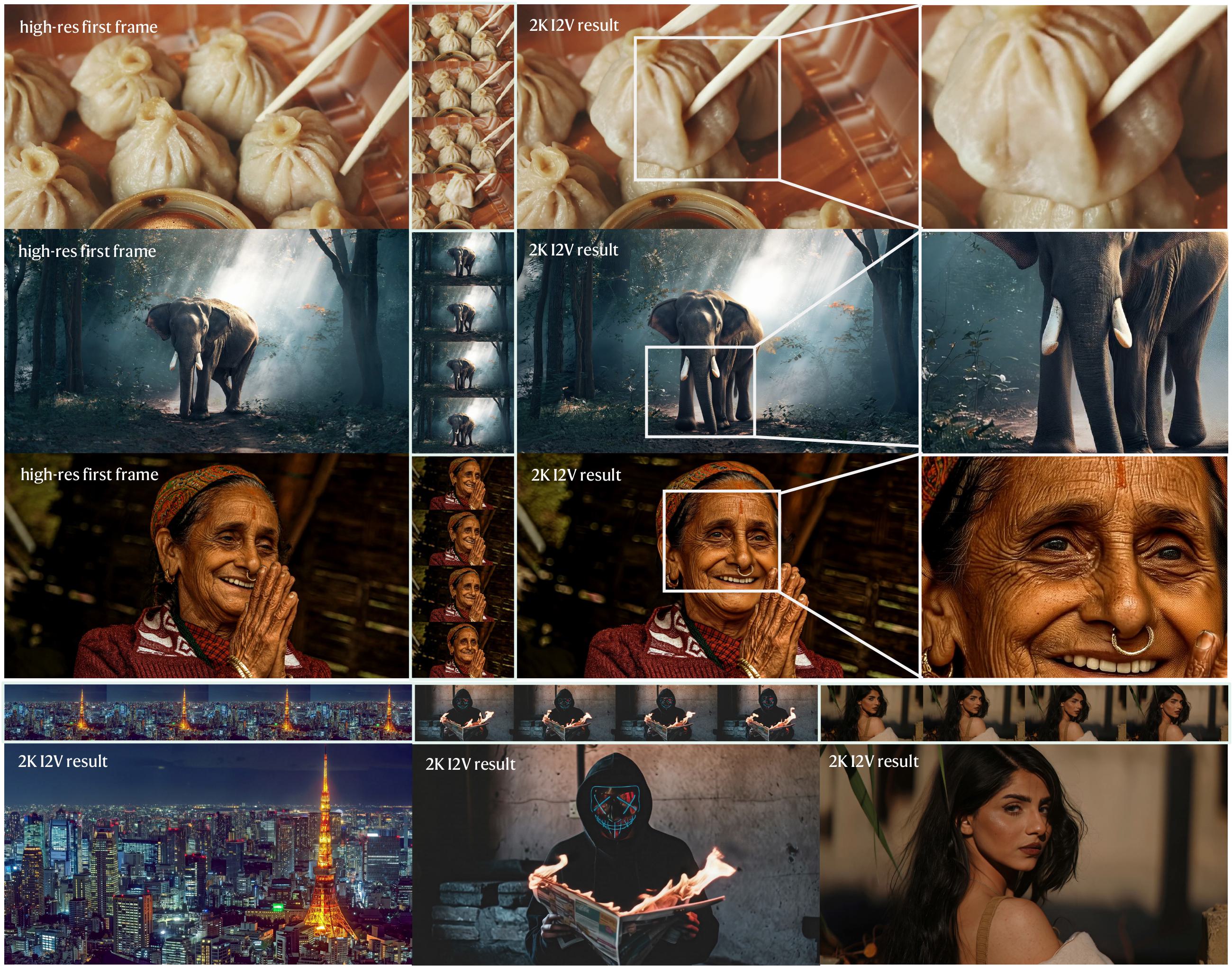

The image-to-image mode lets you edit and transform reference images with the same natural language instructions:

Try generating original AI characters through AI FILMS Studio.

Sources

CNBC | TechSpot | The Decoder | EU Perspectives | Le Monde | Everyday AI
